The Evolution of RAG: How Retrieval-Augmented Generation is Transforming Enterprise AI in 2025

Retrieval-Augmented Generation (RAG) has become one of the most widely used techniques for knowledge-intensive AI tasks. It grounds a language model's output in documents retrieved at query time, bridging the gap between a model frozen at training time and information that keeps changing. As we progress through 2025, RAG continues to evolve from experimental prototype to production architecture.

What Makes RAG Revolutionary?

RAG departs from traditional language models by combining the generative power of large language models (LLMs) with information retrieval at query time. Instead of relying solely on training data, RAG systems consult external knowledge sources, databases, and document stores, so responses can draw on current information and point back to the passages they came from.

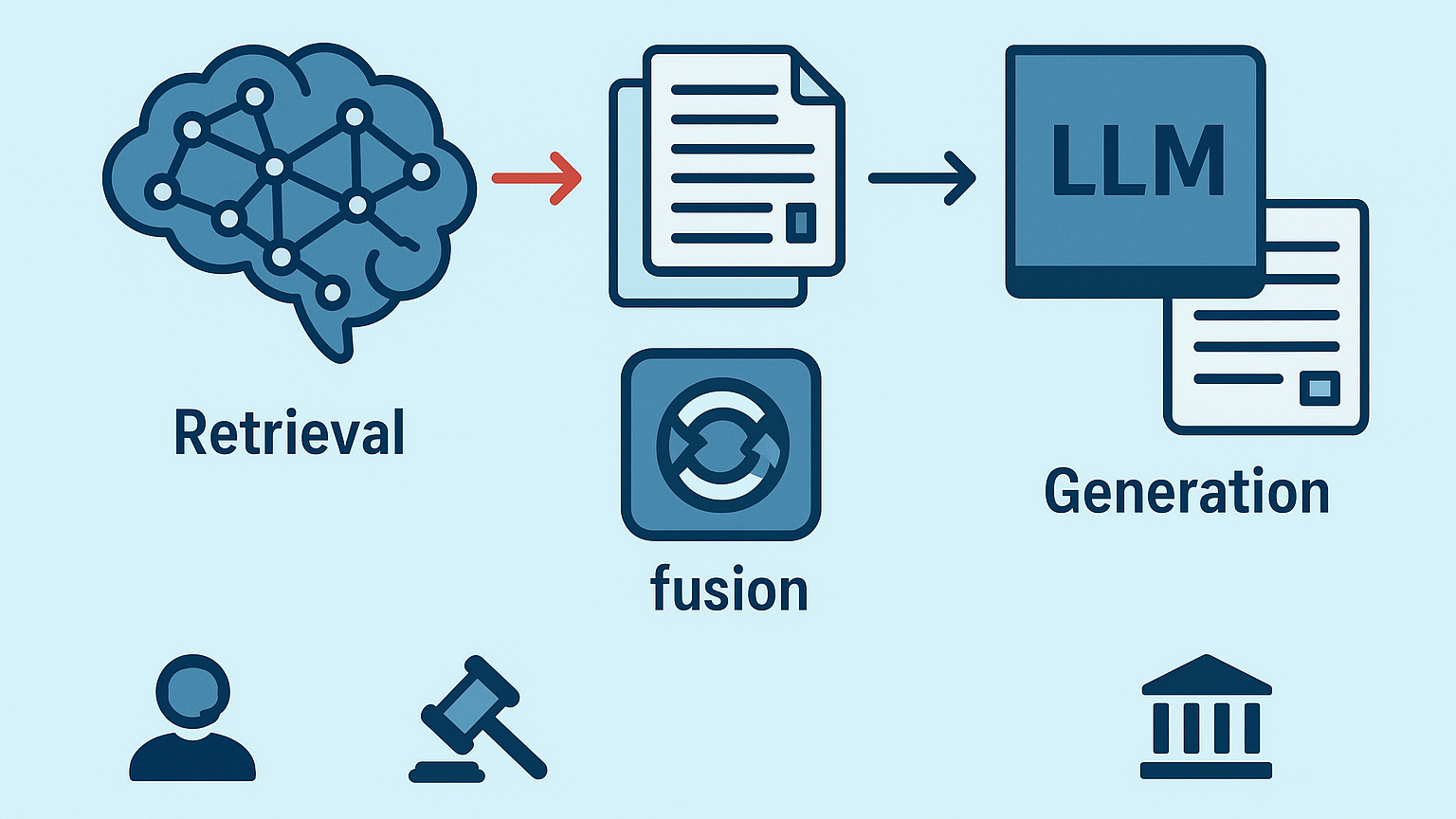

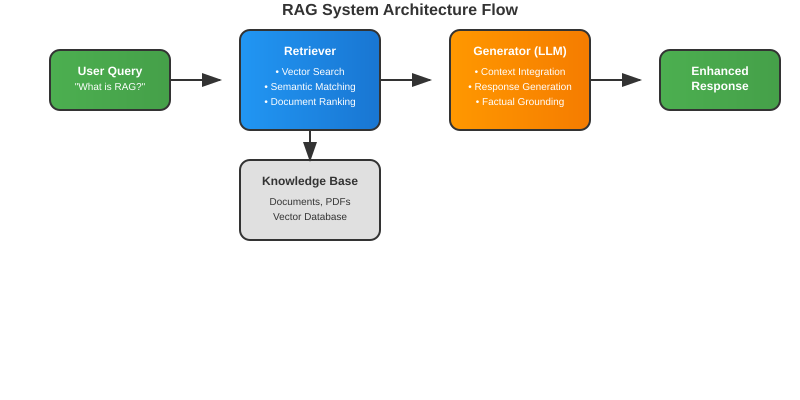

The core architecture consists of three essential components:

- Retrievers: Systems that fetch relevant information from external sources

- Fusion techniques: Methods that combine retrieved information with model knowledge

- Generators: LLMs that produce contextually relevant responses
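To make the three components concrete, here is a minimal sketch using only the Python standard library. It is an illustration under stated assumptions, not a production design: the bag-of-words cosine retriever stands in for a real embedding model, fusion is plain prompt stuffing, and `echo_llm` is a hypothetical placeholder for an actual LLM call. All function names and the toy corpus are invented for this example.

```python
import math
from collections import Counter

# Toy corpus standing in for an external knowledge source (illustrative only).
DOCS = [
    "RAG combines retrieval with generation.",
    "Vector databases store embeddings for similarity search.",
    "Paris is the capital of France.",
]

def tokenize(text):
    """Lowercase and strip basic punctuation; a stand-in for a real tokenizer."""
    return [w.strip(".,?!").lower() for w in text.split() if w.strip(".,?!")]

def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query, docs, k=2):
    """Retriever: rank documents by similarity to the query and keep the top k."""
    q = Counter(tokenize(query))
    return sorted(docs, key=lambda d: cosine(q, Counter(tokenize(d))), reverse=True)[:k]

def fuse(query, passages):
    """Fusion: here, simply place the retrieved passages in the prompt."""
    context = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {query}\nAnswer:"

def generate(prompt, llm):
    """Generator: delegate to whatever LLM callable is supplied."""
    return llm(prompt)

def echo_llm(prompt):
    """Hypothetical stand-in for a model: returns the first context passage."""
    for line in prompt.splitlines():
        if line.startswith("[1] "):
            return line[4:]
    return ""

question = "What is the capital of France?"
answer = generate(fuse(question, retrieve(question, DOCS, k=1)), echo_llm)
print(answer)
```

Swapping `echo_llm` for a real model client and `retrieve` for a vector search would leave the retrieve, fuse, generate flow unchanged, which is the point of separating the three roles.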

Key Trends Shaping RAG in 2025

1. Enterprise-Scale Implementation

Organizations are moving beyond experimental RAG deployments to production-ready systems. The focus has shifted to:

- Scalable vector database architectures

- Real-time knowledge base synchronization

- Multi-domain knowledge integration

- Enterprise security and compliance frameworks

2. Advanced Evaluation Methodologies

The SIGIR 2025 LiveRAG Challenge highlighted the importance of robust evaluation frameworks. Current developments include:

- Automated LLM-based evaluation systems

- Faithfulness and correctness metrics

- Subset sampling evaluation methods (SPEAR)

- Comparative benchmarking across retrieval strategies
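As a rough illustration of what a faithfulness metric measures, the sketch below computes the share of answer tokens that also appear in the retrieved passages. This is a deliberately crude lexical proxy, assumed here for illustration only: it is not the LiveRAG or SPEAR methodology, and LLM-based judges assess whether claims are supported rather than whether words overlap. The function names are hypothetical.

```python
import re

def content_tokens(text):
    """Lowercased alphanumeric tokens as a set."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def support_ratio(answer, passages):
    """Fraction of answer tokens found anywhere in the retrieved passages.

    A crude lexical proxy for faithfulness: 1.0 means every answer token
    appears in the context, lower values suggest unsupported content.
    """
    answer_tokens = content_tokens(answer)
    if not answer_tokens:
        return 0.0
    context_tokens = set()
    for passage in passages:
        context_tokens |= content_tokens(passage)
    return len(answer_tokens & context_tokens) / len(answer_tokens)

context = ["Paris is the capital of France."]
print(support_ratio("Paris is the capital", context))
print(support_ratio("Berlin is the capital", context))
```

A score like this is cheap enough to run on every response, which makes it useful as a first-pass filter before a more expensive automated judge.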

3. Hybrid Retrieval Approaches

Modern RAG systems increasingly combine several retrieval mechanisms rather than relying on one:

- Dense (embedding-based) and sparse (keyword-based, e.g. BM25) retrieval combination

- Multi-vector representations

- Contextual embedding techniques

- Dynamic retrieval strategy selection
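One common way to combine dense and sparse results is reciprocal rank fusion (RRF), which merges ranked lists using only rank positions, so the two retrievers' scores never need to be on the same scale. The sketch below is a minimal version; the document IDs and the two input rankings are made up, and `k=60` is a conventional default rather than a tuned value.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into one ranking.

    Each document scores sum(1 / (k + rank)) over the lists it appears in,
    with rank starting at 1. Ties are broken by document ID for determinism.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=lambda d: (-scores[d], d))

# Hypothetical outputs of a keyword retriever and an embedding retriever.
sparse_hits = ["d1", "d2", "d3"]
dense_hits = ["d3", "d1", "d4"]

print(reciprocal_rank_fusion([sparse_hits, dense_hits]))
```

Documents that both retrievers rank highly (`d1`, `d3`) rise above documents that only one of them found, which is the behavior hybrid retrieval is after.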

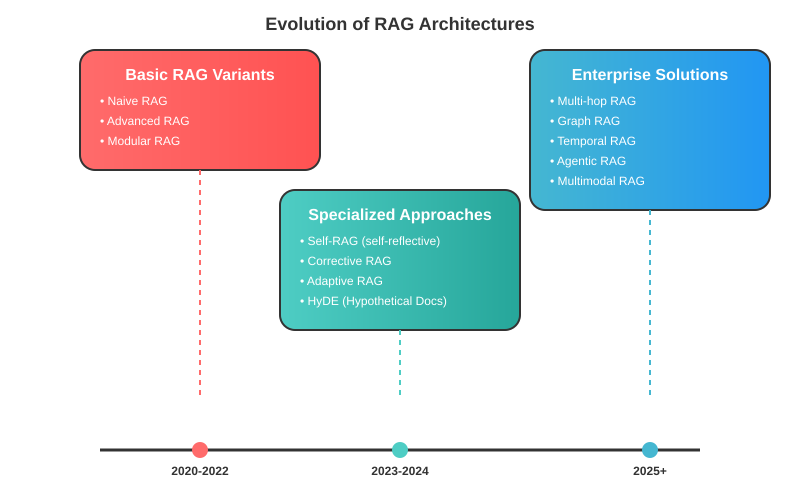

RAG Evolution Timeline

Basic RAG Variants:

- Naive RAG

- Advanced RAG

- Modular RAG

Specialized Approaches:

- Self-RAG (self-reflective retrieval)

- Corrective RAG (error correction mechanisms)

- Adaptive RAG (dynamic strategy selection)

- HyDE (Hypothetical Document Embeddings)

Enterprise-Focused Solutions:

- Multi-hop RAG (complex reasoning chains)

- Graph RAG (knowledge graph integration)

- Temporal RAG (time-aware retrieval)

Real-World Applications Driving Adoption

Customer Support Revolution

RAG-powered chatbots can ground their answers in current product documentation, FAQ databases, and support ticket histories, giving responses that are both accurate and specific to the customer's context.

Legal and Compliance

Law firms leverage RAG to query vast legal databases, ensuring responses are grounded in current regulations and case law.

Healthcare Information Systems

Medical professionals use RAG to access the latest research papers, drug interactions, and treatment protocols while maintaining patient privacy.

Financial Services

Investment firms employ RAG for real-time market analysis, combining historical data with current market conditions for informed decision-making.

Technical Challenges and Solutions

Information Freshness

Challenge: Keeping retrieved information current and relevant.

Solution: Implementing real-time indexing, so documents are re-indexed as they change, and cache invalidation strategies, so stale results are not served afterwards.
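A minimal sketch of the indexing and cache invalidation idea, assuming a content hash is enough to detect changes. The `FreshIndex` class and its substring search are hypothetical simplifications: a real system would re-embed changed documents and would usually invalidate only the affected cache entries instead of clearing everything.

```python
import hashlib

class FreshIndex:
    """Toy index that re-indexes only changed documents and drops stale cache entries."""

    def __init__(self):
        self.docs = {}     # doc_id -> current text
        self._hashes = {}  # doc_id -> SHA-256 of the indexed text
        self._cache = {}   # query -> cached list of matching doc_ids

    def upsert(self, doc_id, text):
        """Index the document if its content changed; return True when it did."""
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
        if self._hashes.get(doc_id) == digest:
            return False  # unchanged: skip re-indexing and keep the cache
        self._hashes[doc_id] = digest
        self.docs[doc_id] = text
        self._cache.clear()  # coarse invalidation: any cached result may be stale
        return True

    def search(self, query):
        """Case-insensitive substring match, served from cache when possible."""
        if query not in self._cache:
            needle = query.lower()
            self._cache[query] = sorted(
                doc_id for doc_id, text in self.docs.items() if needle in text.lower()
            )
        return self._cache[query]

index = FreshIndex()
index.upsert("pricing", "The plan costs 10 USD per month.")
print(index.search("10 usd"))
index.upsert("pricing", "The plan costs 12 USD per month.")
print(index.search("10 usd"))
```

After the second `upsert`, the cached result for the old price is gone, so the query no longer returns the outdated document.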

Retrieval Quality

Challenge: Ensuring retrieved documents are contextually relevant.

Solution: Advanced embedding models and multi-stage retrieval pipelines.
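A multi-stage pipeline typically pairs a cheap first stage that favors recall with a more expensive second stage that reorders a short candidate list. The sketch below shows that shape with stand-ins: unordered token overlap plays the first stage, and bigram (word-order) overlap plays the reranker, where a production system might use a vector search followed by a cross-encoder. The function names and documents are invented for illustration.

```python
import re

def tokens(text):
    """Lowercased alphanumeric tokens in order."""
    return re.findall(r"[a-z0-9]+", text.lower())

def first_stage(query, docs, n=2):
    """Stage 1 (cheap, recall-oriented): rank by number of shared tokens."""
    q = set(tokens(query))
    return sorted(docs, key=lambda d: len(q & set(tokens(d))), reverse=True)[:n]

def bigrams(seq):
    """Set of adjacent token pairs."""
    return set(zip(seq, seq[1:]))

def rerank(query, candidates, k=1):
    """Stage 2 (costlier, precision-oriented): rank by shared bigrams, i.e. word order."""
    qb = bigrams(tokens(query))
    return sorted(candidates, key=lambda d: len(qb & bigrams(tokens(d))), reverse=True)[:k]

docs = [
    "leak in memory of python bindings",
    "debugging a python memory leak",
    "office lunch menu",
]
query = "python memory leak"

candidates = first_stage(query, docs, n=2)
print(candidates)
print(rerank(query, candidates, k=1))
```

Both of the first two documents share all three query words, so the first stage cannot separate them; the second stage prefers the one where the words appear in the same order as the query.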

Scalability

Challenge: Handling enterprise-scale document collections.

Solution: Distributed vector databases and efficient indexing algorithms.
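Distributed vector search usually follows a scatter-gather pattern: each shard returns its own top-k, and a coordinator merges those partial results into a global top-k. The sketch below assumes in-memory shards and exact dot-product scoring; a real deployment would query shards in parallel over the network and use an approximate nearest-neighbor index inside each shard. The shard layout and vectors are made up.

```python
import heapq

def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def search_shard(shard, query_vec, k):
    """Return the shard-local top-k as (score, doc_id) pairs."""
    scored = ((dot(query_vec, vec), doc_id) for doc_id, vec in shard)
    return heapq.nlargest(k, scored)

def search_all(shards, query_vec, k):
    """Scatter the query to every shard, then merge partial results into a global top-k."""
    partials = [search_shard(shard, query_vec, k) for shard in shards]
    merged = heapq.nlargest(k, (hit for partial in partials for hit in partial))
    return [doc_id for _, doc_id in merged]

# Two hypothetical shards, each a list of (doc_id, vector) pairs.
shards = [
    [("a", (1.0, 0.0)), ("b", (0.0, 1.0))],
    [("c", (0.9, 0.1)), ("d", (0.5, 0.5))],
]

print(search_all(shards, (1.0, 0.0), k=2))
```

Because every shard returns its own best k, the merged list is guaranteed to contain the true global top-k for exact search, while each shard only has to scan its own slice of the collection.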

Implementation Best Practices for 2025

1. Start with Clear Use Cases

Define specific problems RAG will solve before implementation.

2. Invest in Quality Data

Ensure your knowledge base is well-structured, current, and comprehensive.

3. Choose the Right Vector Database

Select solutions that scale with your data volume and query patterns.

4. Implement Robust Evaluation

Establish metrics for retrieval quality, response accuracy, and system performance.
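For retrieval quality, two widely used starting points are recall@k (how many of the relevant documents show up in the top k) and mean reciprocal rank (how high the first relevant document appears). The sketch below assumes you have a small labeled set of queries with known relevant document IDs; the IDs here are invented.

```python
def recall_at_k(retrieved, relevant, k):
    """Share of relevant documents that appear in the top-k retrieved list."""
    relevant_set = set(relevant)
    if not relevant_set:
        return 0.0
    return len(set(retrieved[:k]) & relevant_set) / len(relevant_set)

def reciprocal_rank(retrieved, relevant):
    """1 / rank of the first relevant document, or 0.0 if none was retrieved."""
    relevant_set = set(relevant)
    for rank, doc_id in enumerate(retrieved, start=1):
        if doc_id in relevant_set:
            return 1.0 / rank
    return 0.0

def mean_reciprocal_rank(runs):
    """Average reciprocal rank over (retrieved, relevant) pairs, one per query."""
    if not runs:
        return 0.0
    return sum(reciprocal_rank(ret, rel) for ret, rel in runs) / len(runs)

# Hypothetical labeled queries: (retrieved ranking, relevant doc IDs).
runs = [
    (["d3", "d1", "d7"], ["d1", "d2"]),
    (["d2", "d9"], ["d2"]),
]

print(recall_at_k(*runs[0], k=2))
print(mean_reciprocal_rank(runs))
```

Tracking these numbers per release makes it possible to tell whether a change to chunking, embeddings, or ranking helped retrieval before looking at end-to-end answer quality.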

5. Plan for Continuous Learning

Design systems that improve through user feedback and performance monitoring.

Additional Resources

For deeper technical insights into RAG architectures and vector database selection, consider exploring:

- Multimodal RAG Implementation

- Vector Database Comparison

- Enterprise RAG Patterns

Conclusion

RAG represents more than just a technical advancement: it’s a shift toward AI systems whose answers are grounded in retrievable, current context. As we move through 2025, organizations that implement RAG well stand to gain competitive advantages through improved decision-making, enhanced customer experiences, and more efficient knowledge management.

The key to success lies not just in adopting RAG technology, but in understanding how to integrate it effectively with existing systems and workflows. As the technology continues to mature, we can expect even more sophisticated applications that blur the line between artificial and human intelligence.

The RAG revolution is just beginning, and 2025 promises to be the year when this technology truly transforms how we interact with information and knowledge.

This blog post explores the latest developments in Retrieval-Augmented Generation based on current industry trends and research findings. For organizations considering RAG implementation, consulting with AI specialists and conducting pilot projects can help determine the best approach for specific use cases.